May 2023 was a major inflection point for artificial intelligence.

It wasn’t as obvious as the release of OpenAI’s ChatGPT — that happened on November 30, 2022.

But what happened this time last year was no less important.

In May 2023, Tesla released version 11.4.1 of its self-driving software (FSD).

To most, it didn’t mean much at all. Just another minor version update of Tesla’s software… expected to bring only incremental improvements.

With few exceptions, the software release didn’t get much attention at all.

Yet I was extremely excited.

I knew Tesla was on the cusp of dramatic improvements in its self-driving software.

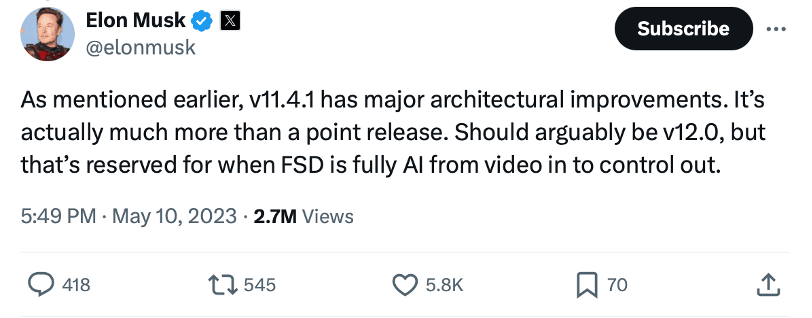

And Tesla CEO Elon Musk even gave the world a hint of what was happening…

He explained that the new software version had “major architectural improvements.”

It was more than just an incremental software update, or “point” release.

He even suggested that it deserved a different name, but chose to hold off on renaming it because the new architecture was still at such an early stage.

While Tesla was short on details, what was known is that over the preceding months, Tesla had essentially scrapped its old software architecture and designed new self-driving software built on neural network technology.

Neural networks, a form of artificial intelligence, benefit from large data sets, from which they can then learn. The general rule of thumb is: the more data, the better.

What most don’t realize… is that the global network of owned and leased Teslas is, in fact, Tesla’s data collection network.

The collective fleet of Tesla sensors, cameras, and computing systems collects real-world driving data… and sends that information back to Tesla as training data for its self-driving software.

It was that data, billions of miles driven by Teslas on Autopilot and on earlier versions of its self-driving software, that enabled the architectural change Musk referenced.

And just think…

As we discussed in Outer Limits—“Your Robotaxi is Arriving”, each Tesla has 8 mounted cameras, including the cabin cam. These cameras don’t just “stare” at asphalt all day, logging mileage.

Together, the cameras provide 360 degrees of visibility around the car, with a range of up to an estimated 250 meters.

It reminds me of the CAPTCHAs we often see online…

We use our human brains to help CAPTCHA algorithms tell a stoplight from a tree. The pictures are often fuzzy or unusual, so humans are helping the software make sense of images that are hard to classify.

Teslas use their 7 exterior cameras to “perceive” millions of objects every day, training the deep neural networks on the data. And they have been doing this for years.

Using a neural network has enabled Tesla to create something like a synthetic visual cortex.

And with improved computational power, Tesla’s AI was able to ingest high-resolution video, not just still images, from cameras located around the car, process that information in a way similar to the human brain, and infer the correct course of action, optimizing for safety while achieving the end goal of reaching a destination.

Release 11.4.1 was the beginning, the turning point that led to what Tesla’s full self-driving (FSD) software has become today.

I wrote about the latest developments earlier this week, in Outer Limits — What in the World Has Tesla Done? This is required reading for Outer Limits readers.

For those of us who have tracked Tesla’s developments closely over the last several years, there were quite a few hints along the way about where it was heading.

In 2021, Tesla removed radar from Model 3 and Model Y production. It did the same in 2022 for the Model S and Model X.

Also in 2022, it removed ultrasonic sensors from the Model 3 and Model Y, and did the same in 2023 for the Model S and Model X.

And it’s worth mentioning that Tesla never used lidar in any of its designs, unlike Google’s Waymo, GM’s Cruise, Aptiv, and many others. Tesla always saw the technology as an unnecessary expense for autonomous vehicles.

It was doing the opposite of every other player in the autonomous driving space. While all of the other players were loading their cars with all kinds of sensors and added expense, Tesla was throwing them away.

It went all-in on vision, which was the first hint of a major architectural change built upon neural networks. It was a brilliant move that cemented Tesla’s leadership in autonomous driving.

And something similar is about to happen with another major name in artificial intelligence right now…

That company is OpenAI, the company behind the popular generative AI tool ChatGPT.

And the transformation is happening with OpenAI’s large language models (LLMs).

On April 24th, a Stanford professor hosted the CEO of OpenAI, Sam Altman, on the Stanford campus. It was part of a series of events called Entrepreneurial Thought Leaders.

If anyone would like to watch the 45-minute discussion, it can be found here. But I’ll warn you, the discussion was light and fluffy, with very few details.

It was standing room only, with a long line and a crowd of people who couldn’t get in.

Given the rapid developments in large language models, especially those coming from OpenAI, and the recent whispers that artificial general intelligence (AGI) is on the horizon, it’s no surprise there was so much interest.

Yet I’m afraid it must have felt like a wet blanket to many in attendance.

Despite how uninteresting the discussion was, Altman did drop a few hints that stirred up excitement in the industry.

And he gave a clear indication that something major is about to change.

When discussing ChatGPT, Altman’s comment was that it is “mildly embarrassing at best.”

It was an offhand comment that came as a surprise to most. After all, most of us are wildly impressed with the current version of ChatGPT, based on GPT-4, and what it is capable of doing. It’s far from an embarrassment.

But the big hint came when Altman mentioned that “GPT-5 or whatever we call that, it’s just going to be smarter.”

Unlike Tesla’s full self-driving, which is a generic name to describe what the software can do, OpenAI’s GPT stands for a specific kind of AI architecture.

GPT stands for generative pre-trained transformer. It is the underlying technology that enables large language models to learn from massive amounts of data and provide synthesized responses in natural language.

Altman said GPT-5 “is going to be smarter” than the current version (which he called embarrassing)…

He also used the phrasing “or whatever we call that,” which tells us that the architecture has changed.

Because of that change in architecture, the technical “GPT” name is no longer completely accurate, and the model will likely need a new, more accurate name… much in the same way that Tesla’s release 11.4.1 deserved to be more than just a “point” release. That release wasn’t perfect, but it was an inflection point that led to something extraordinary: the full self-driving (FSD) software we have today.

The discussion was also the first time that Altman mentioned GPT-6, saying that “GPT-6 is going to be a lot smarter than GPT-5.”

Of course, we should expect a different name. But in the tech world, this tells us that GPT-5, or whatever it ends up being called, is nearing release. It also means that GPT-6, whatever its eventual name, is already in the laboratory.

When asked about the cost of building these new AI models, Altman gave a response that some will perceive as irresponsible:

“Whether we burn $500 million a year or $5 billion or $50 billion a year, I don’t care.”

Outside of Silicon Valley, that kind of attitude would probably result in a CEO losing their job, as it would be considered completely irresponsible and likely to bankrupt a company.

But given OpenAI’s breakthroughs, and the fact that it has already hit an annualized revenue run rate of $2 billion, he can get away with a comment like that.

And venture capital and private equity firms around the world are salivating at the opportunity to give OpenAI as much money as they ask for.

Why? Because they believe Altman and his team will build AGI.

He said as much:

“We’re makin’ AGI, it’s gonna be expensive, it’s totally worth it.”

We may not yet know what the new architecture is. But it is going to be a major leap in performance compared to GPT-4.

And it may very well be that major architectural change that leads to AGI.

Either way, I believe we’re just a month or two away from its release.

And as for the costs involved, I agree. AGI will definitely be worth it.

We always welcome your feedback. We read every email and address the most common comments and questions in the Friday AMA. Please write to us here.